Weighted LAD-LASSO (WLAD-LASSO) method will resist the heavy-tailedĮrrors and outliers in explanatory variables. They proposed to use weighted-LASSO with integrated relevantĮxternal information on the covariates to guide the selection towardsĪrslan (2012) found that, compared with the LAD-LASSO method, the Regression coefficient for variable j, is subject to a larger penaltyĪnd therefore is less likely to be included in the model, and vice versa (2011) found that a large value of Wj, the Robust-LASSO-estimator that is not sensitive to outliers, heavy-tailedīergersen et al. Since the LASSO method minimizes the sum of squared residualĮrrors, even though the least absolute deviation (LAD) estimator is anĪlternative to the OLS estimate, Jung (2011) proposed a Present the weighted-LASSO method to infer the parameters of aįirst-order vector autoregressive model that describes time courseĮxpression data generated by directed gene-to-gene regulation networks. That describes classes of connectivity between the variables. (2010) suggest that owns an internal structure LASSO/LARS it approximates the logistic regression loss by aĬharbonnier et al. Keerthi and Shevade (2007) proposed a fast tracking algorithm for Minimizing the following objective function : Tuning parameters for different regression coefficients. Zou (2006) introduced the adaptive-LASSO by using the different It also satisfies the solution of LASSO with the same currentĪpproach direction and ensures the optimal results and algorithm Improved-LARS algorithm regresses stepwise each path keeps theĬorrelation between current residual individual and all the variables Sign of regression coefficient p and solve LASSO better. They proposed improved-LARS algorithm (2004) to eliminate the opposite Proposed the LARS algorithm to support the solution of LASSO. As a kind ofĬompression estimates, the LASSO method has higher detection accuracyĪnd better parameter convergence consistency. 
Values of regression coefficient p is less than a constant by constructĪ penalty function to shrinkage coefficient. Square of the residuals with the constraint that the sum of the absolute The idea of this method is minimizing the Nonnegative Garrote (Breiman, 1995), Tibshirani proposed one new Inspired by the ridge regression (Frank and Friedman, 1993) and LASSO is an estimate method which can simplify the index set. On the selection of variables and values of regression coefficients. Statistical techniques the accuracy of that analysis mainly depends Linear regression analysis is the most widely used of all Prediction precision and accuracy of the model through selecting The largest explanatory ability to the response, that is, improving the In the process of modeling, we need to find the attribute set which has

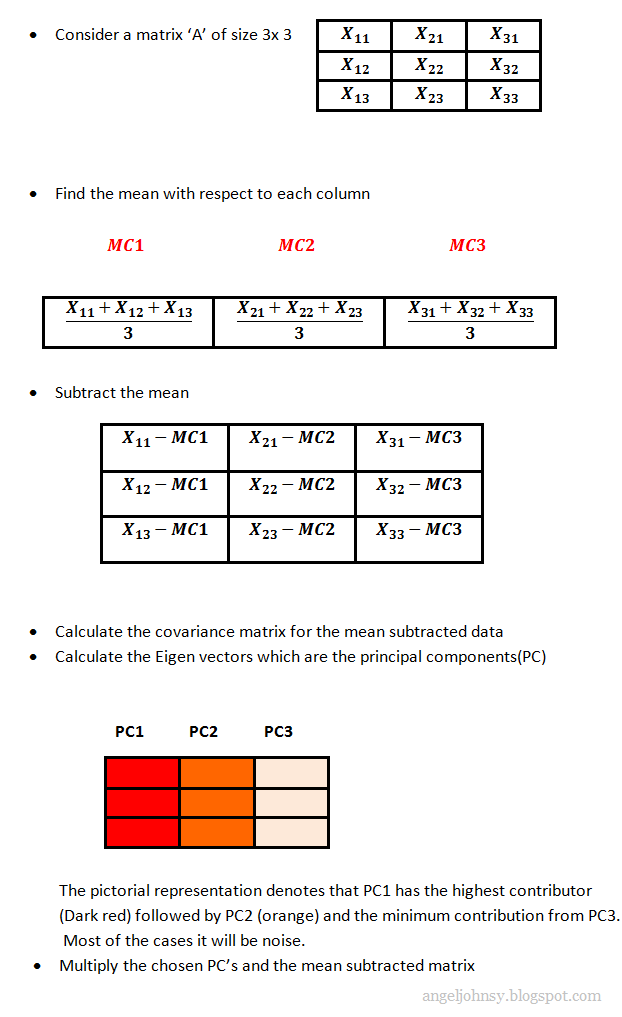

Variables (attribute set) chosen are, the less the model error is. At the beginning of the modeling, generally, the more Linear model, model error usually is a result of the lack of key Information from mass data by mathematical statistics model. Gained much attention in academia regarding how to mine useful Retrieved from ĭata mining has shown its charm in the era of big data it has APA style: Method for Solving LASSO Problem Based on Multidimensional Weight.Method for Solving LASSO Problem Based on Multidimensional Weight." Retrieved from MLA style: "Method for Solving LASSO Problem Based on Multidimensional Weight." The Free Library.We illustrate the method with the analysis of a real dataset con-* taining socioeconomic data and the computational results for nine datasets of increasing dimension with up to 16,000 variables. We propose a simple and efficient algorithm that uses simple forward selection to select variables and the power method to compute eigenvectors. We also show that this approach is strictly related to least squares SPCA by providing a novel interpretation for the latter. We show that these components explain more than a predetermined percentage of the variance explained by the principal components. We propose a practical SPCA method in which sparse components are computed by projecting the full principal components onto a subset of the variables. Sparse components are more interpretable than standard principal components as they identify few key features of a dataset. Sparse principal components analysis (SPCA) methods approximate principal components with combinations of few of the observed variables.
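To make the weighted-penalty idea concrete, here is a minimal sketch in pure Python. It is not the article's algorithm and not LARS: it uses plain coordinate descent with soft-thresholding, a common way to solve L1-penalized least squares. The function names `soft_threshold` and `weighted_lasso`, the 1/(2n) scaling of the squared-error term, the toy data, and the chosen weights are all assumptions made for illustration. Setting every weight to 1 gives the ordinary LASSO penalty; a larger weight on a variable penalizes its coefficient more heavily.

```python
def soft_threshold(value, threshold):
    """Shrink value toward zero by threshold (the L1 shrinkage step)."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def weighted_lasso(X, y, lam, weights, n_iter=200):
    """Coordinate descent sketch (assumed formulation) for
        (1 / 2n) * sum_i (y_i - x_i . beta)^2 + lam * sum_j weights[j] * |beta_j|
    X is a list of rows, y a list of responses; no intercept is fitted.
    """
    n, p = len(X), len(X[0])
    beta = [0.0] * p
    residual = list(y)  # equals y - X beta, since beta starts at zero
    col_sq = [sum(X[i][j] ** 2 for i in range(n)) / n for j in range(p)]
    for _ in range(n_iter):
        for j in range(p):
            if col_sq[j] == 0.0:
                continue  # an all-zero column cannot explain anything
            # Covariance of column j with the residual that ignores beta[j].
            rho = sum(
                X[i][j] * (residual[i] + X[i][j] * beta[j]) for i in range(n)
            ) / n
            new_beta = soft_threshold(rho, lam * weights[j]) / col_sq[j]
            delta = new_beta - beta[j]
            if delta != 0.0:
                # Keep the residual in sync with the updated coefficient.
                for i in range(n):
                    residual[i] -= X[i][j] * delta
                beta[j] = new_beta
    return beta


# Hypothetical toy data generated as y = 2 * x1 + 1 * x2 (no noise).
X = [[1.0, 0.5], [2.0, -0.3], [3.0, 0.8], [4.0, -0.1]]
y = [2.5, 3.7, 6.8, 7.9]

plain = weighted_lasso(X, y, lam=0.1, weights=[1.0, 1.0])      # ordinary LASSO
weighted = weighted_lasso(X, y, lam=0.1, weights=[1.0, 50.0])  # heavy penalty on x2
print("equal weights:", plain)
print("large weight on x2:", weighted)
```

With equal weights both coefficients stay in the model, while the large weight on the second variable shrinks its coefficient to exactly zero, which matches the behaviour described for a large Wj. An adaptive-LASSO-style variant would derive the weights from an initial coefficient estimate rather than fixing them by hand.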
